On March 26, 2026, I stood in a Nashville “mock” courtroom trying a dram shop case to a live jury (and to a large audience of experienced DRI trial lawyers). At the same time, an AI “jury” was doing its own version of the same thing somewhere in the cloud. The AI jury never saw the trial the humans saw: it decided the case from pre‑trial scripts, while the real jurors reacted to a moving target of shifting testimony, missing evidence, and advocacy choices made in real time. The gap between those two experiences turned out to be the most important lesson of the entire exercise. It exposed what AI can and can’t do as a “juror,” why a simple phrase like “It’s the car, not the bar” lands differently with people than with models, and how burden of proof, witness credibility, and even gendered perceptions of trial lawyers are still experienced most directly, and most powerfully, in the human column.

The case: Bull’s Bar, the pedal tavern, and the car

The fact pattern was a classic dram shop scenario with a few colorful twists. The plaintiff, Penny, came to Nashville for her bachelorette weekend. She and her friends chose Broadway’s party district, and Bull’s Bar in particular, for the experience: loud music, crowds, and a mechanical bull.

At Bull’s, Penny decided to ride the mechanical bull. Before she did, she read and signed a written waiver. In her words: “Of course. I’m a lawyer; I read everything.” She acknowledged the risk of being thrown and injured, signed anyway, rode, and was thrown off. She hurt her ankle.

Faced with a swollen, painful ankle, she had choices. She could have gone back to the hotel, gone to an ER, or called it a night. Instead, she iced the ankle, stayed at the bar, and then followed the original plan: a pedal tavern ride to the next stop. No seatbelts, no harnesses, open seating on busy streets—that was part of the “iconic Nashville” appeal.

While she was on the pedal tavern, a drunk driver named David crashed into it, and into her. That collision caused a fracture in her lower leg. The ankle injury from the bull was treatable with a boot; the leg fracture required a scooter or wheelchair and was the reason she could not walk down the aisle at her wedding. Penny had already settled her claims against David. Her remaining theory was that Bull’s Bar should be held liable under Tennessee’s dram shop statute, and punished with punitive damages, for having served David before he drove.

What the live jury actually saw

The live jury saw something the AI “jurors” never got: a dynamic trial, not a script.

The structure was tight—essentially a mini-trial: a brief case introduction from the “judge,” plaintiff’s direct examination, my cross-examination, and closings from both sides. Even within that short format, the plaintiff’s testimony shifted the ground under our feet.

On direct, she added detail that was not in any pre-trial summary or report. Suddenly, David wasn’t just a drunk driver with a .13 blood alcohol level and a dropped beer. He was “wobbly,” had “crazy hair,” and kept “hitting on her maid of honor.” The bar manager was now the person who brought her ice and who decided to serve David a second beer after cleaning up his dropped and broken bottle, despite David being “obviously drunk.” She also admitted that her own memory was “a bit fuzzy”: she couldn’t recall what time she got to the bar or whether she had one drink or four after leaving Bull’s and before the accident.

Those new details let me do several things in real time that the AI never saw:

- Undercut her credibility by contrasting her polished courtroom story with the more spare pre-trial descriptions.

- Emphasize that when the law requires proof “beyond a reasonable doubt,” late-breaking embellishments about David’s appearance should be treated with skepticism.

- Reframe the bar manager not as a “revenue first” villain, but as the person who both cleaned up the bottle and made sure Penny got ice and a place to sit—exactly what a safety-conscious operator would do.

In closing, I leaned into a simple theme—“It’s the car, not the bar”—and anchored it in three pillars: her choices, the waiver, and causation. She chose the party weekend, the bull, and the pedal tavern, and signed away any bull-ride claim. The only reason she couldn’t walk down the aisle was the leg fracture from a car she didn’t drive and from a driver she had already settled with.

The live jurors took that in along with everything else: demeanor, timing, tone, and the natural imperfections and adjustments that make a trial feel real.

What the AI “jury” actually saw

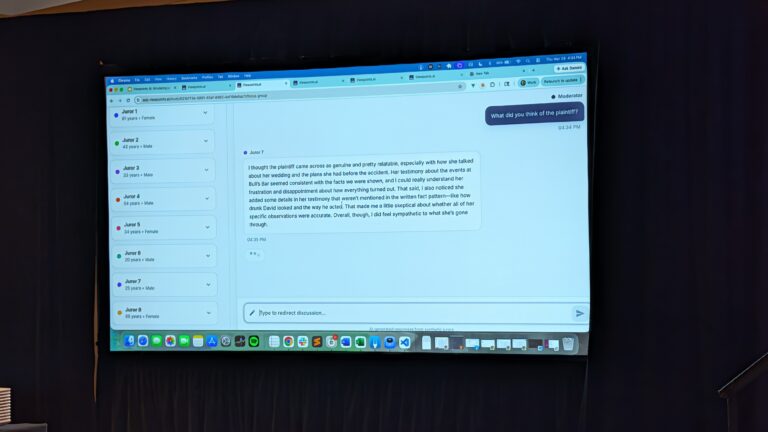

The AI experiment, run by ViewPoints AI, was on a different track altogether. ViewPoints builds large‑scale simulated “juries” using synthetic juror profiles to test how cases play on paper, not in the live courtroom. Because the AI “jury” never watched the mock trial, it didn’t truly “compete” with the live jury; it evaluated a different version of the case based on what we put on paper. That gap is the point of this exercise. For this seminar, the humans reacted to the case in the room, while the AI reacted to the case as we described it in advance.

In the weeks before the seminar, we submitted anticipated outlines of direct, cross, and closing. Those written materials—essentially a planned version of the case—were what the AI “jurors” evaluated. They never “watched” the mock trial, never heard the plaintiff say her “memory is a bit fuzzy,” never saw her expand her description of David, and never heard the live versions of either closing argument.

The AI system itself was sophisticated. Its designers built thousands of synthetic juror profiles using psychological research, demographic data, and even programmed flaws like uneven memory. After generating verdicts and damages ranges, the system allowed for individual “juror” interviews: What mattered most to you? What might have changed your mind? How did you weigh plaintiff’s decisions versus the bar’s conduct?

But all of that analysis rested on a frozen record. The most important human moments—the improvised cross questions, the emotional tone of testimony, the way a lawyer’s phrase lands in the room—never made it into the AI’s world.

Outcomes: similar direction, different path

Despite these differences, the bottom line on liability was broadly aligned. Both the live jury and the AI jury were simulations of how a real jury might respond; neither is “ground truth.” But taken together, they gave us two overlapping views of the same dram shop story.

The live jury ultimately voted 8–3 in favor of Bull’s on dram shop liability. Of the three jurors who would have found liability, two would have awarded just $5,000 against the bar; the third would have awarded $50,000. All three figures were at least an order of magnitude below plaintiff’s ask of $500,000 in compensatory damages and $1 million in punitive damages.

The AI “jury,” working only from pre-trial materials, also produced a modest majority for the defense. Among the AI “jurors” who did find liability, the median damages assessment was in the mid-six figures. Interestingly, some of the AI “jurors” focused on clean doctrinal issues (statutory elements, causation language) more than on the emotional wedding narrative.

Put numerically, roughly 73% of the live jurors (8 of 11) favored a defense verdict, versus about 57% of the AI “jurors.”

The alignment on liability suggests that Tennessee’s heightened dram shop standard is a powerful anchor regardless of the decision-maker. The divergence on damages hints at what AI may be missing: the subtle cues that caused real jurors to view plaintiff’s “ruined wedding” story as something she could have mitigated by rescheduling, not as a life-defining tragedy deserving vast compensation.

What the live jurors taught us

The debrief with the live jurors was a reminder of why actual post-verdict conversations are so valuable.

They were not especially moved by “I can’t walk down the aisle,” “I can’t show off the back of my dress,” or “I won’t have a first dance.” Their reaction was pragmatic: several jurors said they might have awarded more if she’d made a genuine effort to adjust her plans rather than insist that a fixed date transformed her injuries into something unique.

They were sharply aware of missing pieces of evidence. They wanted to see the security video that supposedly showed David stumbling into the bar. One juror, who described herself as habitually clumsy, pointed out that stumbling through a doorway can mean many things unrelated to intoxication. They also wanted to hear from the bar manager. If you’re going to frame him as the villain whose choices created dram shop liability, they expected to see him on the stand.

They also thought naturally in terms of other potential fault holders. More than one juror questioned why the pedal tavern operator had no seatbelts or restraints. Even though that entity wasn’t on the verdict form, the jurors instinctively wondered whether some share of the blame should go there.

Finally, they cared about advocacy style. They viewed plaintiff’s lawyer as highly prepared and professional but noted that she came across as relatively unemotional. Several jurors commented that my own approach (non-aggressive cross, short fact questions, clear structure) made me seem trustworthy and focused on “what mattered.” The jurors also openly acknowledged the gendered realities: a male lawyer can get away with a tone that the same panel might perceive very differently coming from a woman. At the same time, my choice to take a more sympathetic tone, even on cross, added credibility, even with the one juror who openly admitted she doesn’t like men at all (she conceded that I wasn’t so bad!).

What the AI experiment really showed

AI juror simulations can be helpful during preparation. They can stress-test themes against a broad set of synthetic profiles, highlight how different combinations of facts and instructions might play, and force us to articulate our theories cleanly on paper. They can even provide structured, repeatable feedback that’s hard to get from a single focus group.

What they cannot yet do, especially when working from pre-trial scripts, is fully capture the most human aspects of trial practice:

- The way a witness’s off-script answer opens up a new line of impeachment.

- The subtle shift in tone when a juror visibly reacts to a particular fact.

- The strategic choice to elevate or drop a theme based on how the live testimony actually came in.

There’s another category an AI jury, focus group, or pre-trial mock jury can’t capture at all: how jurors react to the behavior of the people in the room. I’ve had a client make audible, sarcastic comments during trial—loud enough to draw not one, but two admonitions from the judge, the second in front of the jury. I’ve watched lawyers roll their eyes, smirk, and visibly bristle when a ruling goes against them. Live jurors notice every one of those moments; an AI “jury” reading a transcript can’t.

In this experiment, the AI “jurors” were blind to those real-time dynamics. They rendered verdicts on a record that experienced trial lawyers would recognize as “the outline we thought we were going to try,” not the case we actually tried.

The AI jury could have been given a live feed of the trial or a full video replay, but for practical reasons, in this experiment it wasn’t. Like any pre‑trial mock jury or focus group, it was limited to the record we could realistically create before trial: our outlines and scripts, not how the plaintiff actually testified, how the arguments evolved, or how the jurors reacted. That choice raises the real question for litigators: if you let an AI “jury” into the courtroom to see what human jurors see, does it start to look more like them, or does it only expose, even more clearly, what machines, or lawyers, still miss about how trials really work?

I am very interested in finding out whether DRI and ViewPoints AI can arrange to run the video through the AI program.

The process did demonstrate a truism about all AI: we need to be precise about the question we ask it to answer. An AI “jury” can tell you how a scripted version of your case might land with a large, varied, simulated audience. It may also be able to tell you how a real jury might respond to the living, breathing messiness of trial, but only if it is given that chance.

Practical takeaways

For me, the seminar reinforced a few practical points:

- High burdens of proof still matter. Explaining and leaning into the differing burdens (a preponderance of the evidence as the general civil standard, proof beyond a reasonable doubt for dram shop liability under Tennessee law, and clear and convincing evidence for punitive damages) helped frame the defense for both human and AI evaluators.

- Short, memorable framing helps, but only if tied to evidence. “It’s the car, not the bar” showed up in juror questionnaires and seemed to shape how people thought about causation, even if they didn’t repeat the phrase in deliberations.

- Jurors expect you to show, not just tell. If you reference video or a key actor repeatedly, they notice when you don’t produce them.

- Tone and gender dynamics in advocacy remain very real. Controlled, fact-driven cross-examination can be as effective as (and often more acceptable than) a more aggressive style, depending on who is asking the questions.

- AI is a supplement, not a substitute. It can enhance preparation, illuminate blind spots, and broaden our view of potential juror reactions. The art of trial advocacy is still performed in front of human beings, in real time, with all the improvisation that entails.

The DRI mock trial didn’t give us a head-to-head contest between human and machine juries on the same performance. It did something more useful: it highlighted how different the “case on paper” is from the case in the courtroom and reminded us that while AI can help us refine the former, the latter still belongs squarely in the hands of live advocates and live jurors.